Terminal Uncertainty

Why the Market Is Repricing the Wrong Thing in the Right Way by the Wrong Amount

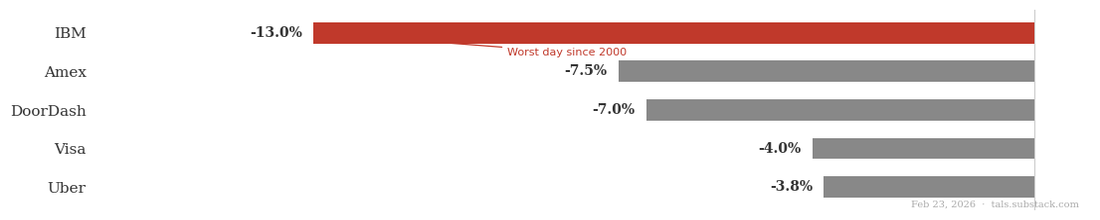

Last weekend a research firm called Citrini published a piece that moved markets. By Monday it had dragged DoorDash, Visa, IBM, Uber, and American Express down anywhere from 4 to 13 percent. IBM had its worst single day in 25 years. By Tuesday, Ben Thompson — who writes Stratechery — had published a detailed rebuttal. By Wednesday the tech world had largely decided the whole thing was irrational panic, another AI doomer piece that got more attention than it deserved.

I think that dismissal is too easy. But so is the doomer take.

The Citrini piece was doing two things at once. The first was painting a picture of broader economic dystopia: a world where AI concentrates gains at the top, hollows out the middle, and leaves workers and consumers with less leverage than they have today. This is a legitimate anxiety. The piece articulates it viscerally, which is why it went viral. It taps into something real, or something that feels real, about where the current trajectory leads if you follow it forward without assuming things adapt.

The second thing the piece was doing was supporting that vision by making specific analytical claims about specific businesses (DoorDash, Visa, real estate, credit cards) and arguing that AI will destroy or meaningfully impair them. Here is where it gets sloppy in the ways Ben correctly identified. It treats these companies as pure rent extractors, as if their entire existence is a tax on human weakness, ignoring that they were built to solve real problems and hold their positions through the affirmative choice of everyone who uses them. But Ben’s rebuttal doesn’t fully assuage the fears driving the selloff. Those fears aren’t about whether these companies created value or whether network effects are real. They are about what moats are actually made of (which parts are durable, which compress, and which evaporate entirely) and about our growing uncertainty over the answers to those questions in a potentially very new era.

Every Moat Is a Stack

Markets were previously pricing companies, particularly tech companies, as if their moats were largely monolithic and durable. But they aren’t, at least not uniformly, and that mispricing is what the current selloff — for all its noise and panic — is slowly and imprecisely trying to correct. I say imprecisely, because the markets are generally treating all moats the same, even though most are a stack of three distinct layers that AI affects in completely different ways, on different timelines, through different mechanisms.

Layer 1: The Physics Floor

The bottom layer is the part that's genuinely AI-resistant, not because AI isn't powerful enough but because the constraint isn't informational. It's physical. And more specifically, it's the accumulated physical infrastructure built to operate within real world constraints at scale. A fulfillment network of hundreds of warehouses, positioned precisely to minimize last-mile distance to every major population center, takes decades and tens of billions of dollars to build. A fleet of delivery vehicles, a network of charging depots, a logistics operation capable of moving millions of packages a day with sub-24-hour reliability — none of that gets replicated by a better algorithm or a well-funded startup in a reasonable timeframe. The physical world has a different kind of barrier than the informational one: you can't rapidly iterate your way to a national warehouse footprint. You have to build it. That accumulated physical scale is what AI can't touch, at least not directly, and it sets a real floor under companies whose moats are grounded in it — and no floor at all under companies whose businesses are purely informational.

Layer 2: Coordination Overhead

Above that sits the organizational cost of managing complexity across many nodes, either physical or informational: matching, routing, pricing, trust, settlement, data accumulation. This is the Coasian core of why platforms exist in the first place, and it represents real value creation that’s easy to take for granted because the platforms have been around long enough to make it invisible. Think about what food delivery actually looked like before aggregators. You wanted Thai food at 9pm. You needed to know which restaurants in your area even delivered, find a phone number, call and hope someone picked up, read your credit card number aloud to a stranger, and then wait with no tracking, no recourse if the order was wrong, and no visibility into whether a driver existed. The friction wasn’t just inconvenient — it was a real cost that limited the size of the market. DoorDash didn’t just take a cut of transactions that were already happening. It created transactions that couldn’t happen before, by collapsing the coordination overhead that made them impossible.

This layer is more complicated than it looks, because it contains four distinct mechanisms that matter for very different reasons.

The first, and most durable, is indirect network effects — where more users on one side attract more suppliers on the other, and the suppliers are what actually improve the product. More customers on DoorDash means more restaurants join means better selection. More buyers on Amazon means more sellers join means more options and more competitive pricing. The value isn’t the users themselves — it’s what their presence attracts. The supply side builds up into something real and independent.

The second is direct network effects — where the product gets more valuable to you directly because more people use it. More people on WhatsApp means more people you can message. More people on Twitter means a larger conversation. The value flows directly from user to user, which means the value is the concentration itself. This is powerful — but it’s a different kind of moat than the first, for reasons that matter a lot in an agent world.

The third is data accumulation. A lot of what we call coordination quality is really accumulated data that compounds over time — DoorDash’s routing is better because it has more routing history, its fraud detection better because it has more transaction history. This is real quality earned through scale, not manufactured friction. But it behaves differently than it looks, for reasons I’ll get to.

The fourth is constructed switching costs — the deliberately engineered friction that some moats are built on rather than real coordination achievements. API integrations that are painful to migrate. Data formats that don’t export cleanly. Contractual lock-ins. Onboarding costs amortized over years that make leaving feel expensive even when staying is suboptimal. Unlike the other mechanisms, this one isn’t solving a coordination problem.

Layer 3: Default Capture

Default capture is monetizing the fact that humans are finite. Attention-constrained. Habitual. Susceptible to defaults. Willing to pay a worse price to avoid four more clicks. Incapable of optimally processing all available information before making a decision, so they use shortcuts — and platforms that plant themselves in the path of those shortcuts extract enormous value as a result. Think about what’s actually happening when you open DoorDash because you’re hungry. You’re not running a rational comparison across every available food delivery option, evaluating price, speed, selection, and driver availability in real time. You’re opening the app that’s already on your phone because you’ve used it before, because the activation cost of doing something different is higher than the cost of just ordering again. That behavioral groove is worth an enormous amount of money. It drives reorder rates, it justifies higher take rates, it means you don’t notice when the service fee goes up two dollars. The product works fine, sure, but the margin isn’t coming from the product being fine. It’s coming from you not really looking.

The same dynamic runs through almost every dominant consumer platform. Your credit card sits in your Apple Wallet not because you did a rigorous analysis of every card’s reward structure last Tuesday, but because you set it up years ago and the friction of changing it is higher than the value of optimizing it. You almost certainly have a streaming service you’re not using. A subscription you forgot to cancel. A default browser you never switched. These aren’t irrational behaviors — they’re entirely rational given the costs of attention and the value of time. But they generate enormous rents for the platforms that captured them.

Most successful platforms are a stack of all three. The Physics Floor is often the foundation. Coordination Overhead is the legitimate business of reducing friction. Default Capture is the bonus margin layer on top — real, substantial, and almost never separated out from the others in how we value these companies.

How AI Hits the Stack

Layer 1: Still Standing, For Now

As mentioned above, the Physics Floor is the one layer AI can’t touch directly, at least not today. The constraints are material, not informational, and software alone doesn’t dissolve them. But there’s a long-run caveat worth naming. Self-driving vehicles are already operating commercially in several cities. Humanoid robots are moving from research labs to factory floors. The specific physical constraints that make delivery, logistics, and location-based services AI-resistant today (a driver needs to be somewhere, food needs to travel a distance, a hand needs to pick something up) are exactly the constraints a sufficiently capable AI-powered robotic layer would dissolve. I’m not going to go deep on that thesis here, but it changes the shape of the long-run argument. The floor has a trapdoor. It opens slowly. But it’s there.

Layer 2: Four Mechanisms, Four Exposure Profiles

Indirect network effects are the most durable part of Layer 2. The supply side they built up — more restaurants, more drivers, more sellers, more options — is real value that an AI agent would independently value and have difficulty replacing. More drivers on DoorDash means faster pickup times in a given zip code. More sellers on Amazon means more competitive pricing on a given product. An agent evaluating these platforms measures the same underlying quality a human was using as a proxy. The users were a means to an end. This moat converts in an AI world.

Direct network effects are more exposed. Humans need to concentrate on one platform because search and coordination are expensive — you go where you expect others to be because finding out where everyone actually is costs time and attention you don’t have, and so does everyone else. An agent doesn’t have that problem. It queries all platforms simultaneously at essentially zero marginal cost and routes to actual density directly. The coordination premium — the value sustained not by underlying quality but by shared expectation of concentration — compresses. And the erosion isn’t linear. Direct network effects are self-reinforcing until they aren’t: the shared expectation holds until enough participants defect, at which point it fragments suddenly rather than gradually. Slow until it’s sudden.

Data accumulation is more nuanced. Agents generate more data faster, which could compound the incumbent’s advantage, but they’re also more willing to route across multiple platforms, fragmenting the transaction history that makes the advantage real. And data advantages may have diminishing returns: ten times more data doesn’t make a routing algorithm ten times better. The incumbent’s advantage plateaus faster than the scale story may suggest.

Constructed switching costs face the simplest and fastest exposure. An agent that can automate migration, normalize data formats, and evaluate total switching cost across a multi-year horizon removes the friction that made this real. What’s left is only whatever genuine coordination value existed underneath it, which is often less than it looked.

Layer 3: Losing the Tax Base

Default Capture is where AI’s impact is most precise — and most misunderstood. Introduce an agent into this picture. Not a smarter human — a fundamentally different kind of economic actor. An agent has no home screen. It has no muscle memory. It didn’t set up a default card three years ago and forget about it. It has no concept of “I always order from here.” Every decision it makes is evaluated fresh against available options at essentially zero search cost. The agent isn’t a more diligent consumer. It’s a consumer that was never susceptible to the specific human limitations that Default Capture was designed to monetize. Which means this layer doesn’t compress gradually. It loses its tax base. There’s no product improvement or pricing adjustment that fixes this — you can’t make yourself stickier to a consumer that was never sticky by nature. It’s not that AI makes a better DoorDash or a cheaper Visa. It’s that AI removes from the transaction the very human properties that a significant portion of the margin depended on.

In summary, not every moat simply dies in an agent world. Some convert. The same advantage a human arrived at via habit or cognitive shortcut may be arrived at by an agent via direct rational evaluation, re-derived from first principles by a more efficient evaluator. In some cases it may even emerge stronger, because agents are better at identifying real quality than humans using mental shortcuts ever were. Others evaporate. Strip away the human psychology and there’s nothing underneath. The friction was the product. The habit was the moat.

The principle that separates them: moats convert when they are themselves measures of real underlying quality (denser supply chains, for example), or when they are rational shortcuts to real underlying quality that an agent can evaluate directly and arrive at independently. They evaporate when the shortcut was the entire product, when there’s no quality signal beneath the inertia, the habit, or the friction. If your moat was pointing at something real, agents find it faster and more reliably. If your moat was the pointing itself, or the difficulty of pointing to begin with, you’re in trouble.
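One way to make the conversion test concrete is to write it as a tiny classifier. This is only a sketch of the rule as stated above, not a valuation model: the class name `MoatMechanism`, the two boolean fields, and the function `conversion_test` are hypothetical names introduced for illustration, and the example labels are my reading of cases discussed elsewhere in this piece.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    CONVERTS = "converts"
    EVAPORATES = "evaporates"


@dataclass(frozen=True)
class MoatMechanism:
    """Hypothetical record describing one moat mechanism."""
    name: str
    # The advantage is itself a measure of real quality
    # (for example, dense supply) that an agent can evaluate directly.
    is_real_quality: bool
    # The advantage is a human shortcut (habit, default, friction)
    # that nonetheless points at real quality underneath.
    shortcut_points_at_quality: bool


def conversion_test(m: MoatMechanism) -> Outcome:
    """Apply the rule: a moat converts if it is real quality, or a
    shortcut to real quality; otherwise it evaporates."""
    if m.is_real_quality or m.shortcut_points_at_quality:
        return Outcome.CONVERTS
    return Outcome.EVAPORATES


# Illustrative labels only (assumptions, not measurements).
restaurant_density = MoatMechanism("restaurant density", True, False)
cio_default_to_mainframe = MoatMechanism("CIO defaults to mainframe", False, True)
forgotten_default_card = MoatMechanism("forgotten default card", False, False)

for mechanism in (restaurant_density, cio_default_to_mainframe, forgotten_default_card):
    print(f"{mechanism.name}: {conversion_test(mechanism).value}")
```

The binary output is a simplification. In the cases that follow, most mechanisms convert in part and evaporate in part, so a fuller version would score each mechanism on a spectrum rather than sort it into two buckets.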

The Cases

Apply this framework to the companies Citrini and Ben were arguing about, and to those most affected by the selloff, and you get a view in which the selloff is both rational and likely overblown, depending entirely on which layer you’re looking at and for which company.

DoorDash: The Right Poster Child, For the Wrong Reasons

DoorDash has no Physics Floor. The physical constraints — food cools as it travels, drivers occupy specific locations — are real, but DoorDash doesn’t own or control any of them. The drivers are independent contractors. The restaurants are independent businesses. DoorDash is a pure coordination layer sitting on top of physical assets it doesn’t hold. That matters enormously for how you think about the moat, because it means the entire moat is Layer 2 and Layer 3 — both of which AI applies pressure to directly.

Layer 2 is complicated, because the mechanisms don’t all face the same pressure and the bull case is right about some of them. The indirect network effects are the most durable part: real restaurant density and real driver density are advantages an agent independently values and arrives at. More selection and faster pickup times are objectively better regardless of who’s evaluating them. That converts. The data accumulation advantage in routing, fraud detection, and restaurant relationships is real quality earned through scale, though subject to diminishing returns and fragmentation as agents route across multiple platforms. That partially converts.

The direct network effects are where the bull case gets too comfortable. DoorDash’s coordination lock operates on three sides simultaneously — drivers concentrate on DoorDash because customers are there, customers are there because drivers are there, restaurants join because both are there. That’s a genuinely powerful dynamic, and harder to dislodge than a one-sided lock. The bull case leans on this correctly. But direct network effects are exactly what agents undermine — humans needed to concentrate on one platform because search was expensive and coordination failure was costly. Agents don’t have that problem. Customer-facing agents compare platforms in real time. Driver-facing agents optimize across DoorDash, Uber Eats, and Grubhub simultaneously, flowing to wherever demand is highest. Restaurant management systems with agent capabilities list and manage orders across all platforms with minimal friction. When all three sides are being optimized by agents simultaneously, the concentration dynamic that made the direct network effects self-reinforcing starts to look more like a liquid market than a locked one. What remains is the underlying indirect network effect value stripped of the coordination premium that was sitting on top of it.

That coordination function itself still has real value — someone has to aggregate the supply, match it to demand, handle payments, manage disputes, and maintain the infrastructure that lets all those agents transact in the first place. DoorDash as a coordination layer for agent-driven supply and demand is still a real business. But it’s a different business, and the pre-selloff multiple was pricing the direct network effect premium as if it would persist as strongly. It won’t.

Which brings us to Default Capture, which is substantially exposed. The “app on your home screen when you’re hungry” moment — the entire behavioral architecture that generates habitual reorders and default choices — doesn’t exist for an agent. Apply the conversion test: is the habit pointing at real underlying quality an agent would independently arrive at? Partially — DoorDash’s selection and speed are real. But the margin premium above what the quality alone would support likely is not. That part evaporates. Moreover, supposed constructed switching costs — saved preferences, integrated loyalty programs, default payment methods — are essentially Default Capture wearing a Layer 2 costume, and face the same pressure.

The fair verdict sits between both camps. Citrini was wrong that DoorDash is a pure rent extractor — the indirect network effects are real and convert cleanly, and the underlying coordination achievement was genuine value creation. Ben is right that it isn’t. But the bull case conflates direct and indirect network effects, treating the coordination premium as permanent when it’s precisely what agents dissolve. The moat converts in part and compresses in part. The honest answer is: there’s a real business here, with real network density that survives the transition. It’s just worth less than the bull case is currently acknowledging.

Visa: Almost No Floor

Visa has no Physics Floor either. Settlement finality gets cited as a physics-adjacent property — there’s a real moment of irrevocable clearing — but it’s institutional and regulatory infrastructure, not physical assets. No warehouses. No trucks. No machinery. The floor is theoretical at best, and for the purposes of this analysis, essentially absent.

Layer 2 deserves more careful treatment than the doomer analysis gave it. The global rails Visa built — trust infrastructure across thousands of banks, cross-border currency handling, real-time authorization across billions of transactions — represented decades of genuine coordination work. The fraud detection operation in particular is worth taking seriously: Visa’s models run across its entire global network simultaneously, seeing transaction patterns across every merchant category, every geography, every card type in real time. No individual fintech has that cross-network view, and the breadth of that signal is genuinely different in kind from what any single issuer or challenger can replicate. The gap has narrowed — every major fintech has built capable fraud models — but Visa’s scale provides a signal depth that hasn’t been fully commoditized.

But look at which Layer 2 mechanisms are actually doing the work. Visa’s network effect is almost entirely direct — merchants accept Visa because consumers carry it, consumers carry it because merchants accept it. The value is the concentration itself, not anything the participants built up. An agent evaluating payment rails doesn’t treat universal acceptance as a proxy for safety or convenience. It evaluates cost, reliability, and settlement terms directly, and finds the major networks increasingly interchangeable on those dimensions. There’s no indirect network effect underneath to fall back on — no supply side that got better because more people showed up. The coordination premium is under active attack from ACH improvements, stablecoin settlement, and a decade of fintech disaggregation that predates AI entirely. And the constructed switching costs — embedded billing relationships, card-on-file infrastructure, credential ubiquity — are the most Default-Capture-like part of the stack, and agents optimize them away by default.

The Default Capture layer is vast and almost entirely exposed. Points inertia. Default cards from 2019 still attached to subscriptions because no one got around to updating them. Brand familiarity that feels like safety without being meaningfully safer. A rational agent evaluating payment rails finds functional equivalence among the major networks and optimizes for cost and reliability. The fraud detection advantage is real but not sufficient to sustain the premium Visa charges; it’s a narrowing gap, not a widening one. The behavioral substrate that sustained Visa’s pricing power has no agent analog and converts to nothing. Citrini was right to flag Visa, if a bit overzealous.

IBM: When the Moat Is the Migration Cost

IBM has no Physics Floor — it’s a services and software business, and nothing about its core offering is materially constrained. But IBM’s Layer 2 is built around the deepest constructed switching costs of any company in this analysis, and that distinction matters enormously for how you think about its exposure.

The proximate cause of IBM’s worst single day since 2000 was Anthropic announcing that Claude Code can modernize COBOL — the 67-year-old programming language that runs critical infrastructure at banks, governments, and airlines, almost all of it on IBM mainframes. The market read this as an existential threat to IBM’s installed base along the lines of the Citrini article. That reading makes the exact mispricing error this framework is designed to identify. Code translation is not mainframe migration. As IBM itself said in response: translating COBOL is the easy part. The real work is data architecture redesign, runtime replacement, transaction processing integrity, and hardware-accelerated performance built over decades of tight software and hardware coupling. A major bank migrating off IBM mainframes isn’t a software project — it’s a multi-year, nine-figure undertaking with meaningful probability of serious operational failure at every stage. The constructed switching costs don’t live at the code layer. They live everywhere below it.

If AI agents can now do the consulting work that mainframe migration requires, doesn’t that lower the switching cost and expose the mainframe business too? Partially: migration becomes cheaper if the labor cost compresses. But the architectural complexity that makes migration genuinely dangerous isn’t consultant-dependent. Consultants were managing that complexity, not creating it. The risk of catastrophic failure during a major bank’s core systems migration doesn’t go away because the project manager is an AI. If anything, IBM’s own track record here is telling: it has been selling AI COBOL modernization tools since 2023, and despite that, just reported its highest mainframe revenue in 20 years. The installed base was already absorbing this threat. The market priced an acceleration of something that had already been happening slowly and wasn’t moving the needle.

There’s also a Default Capture layer on the mainframe side. “Nobody ever got fired for buying IBM” is one of the most durable corporate Default Capture mechanisms ever constructed — decades of enterprise procurement defaulting to IBM infrastructure not because it was rigorously re-evaluated each cycle but because it was the safe choice, the defensible one, the one that distributed blame in the event of failure. An AI agent embedded in enterprise procurement doesn’t have a career to protect — it evaluates on cost, capability, and fit. But this cuts differently in the enterprise than it does for consumer platforms. The CIO defaulting to IBM mainframes isn’t doing so purely out of inertia — the underlying quality is genuinely hard to replicate, and the habit is pointing at something real. That’s the kind of Default Capture that converts rather than evaporates. Strip away the inertia and the rational case for the infrastructure often survives on its own merits.

But IBM isn’t just infrastructure, and this is where the analysis splits in exactly the way the selloff didn’t. A significant portion of IBM’s revenue is consulting and services — knowledge work sold at a premium, wrapped in IBM’s brand and long-term enterprise relationships. The moat here is different in kind: not Default Capture but constructed switching costs in the form of embedded relationships, ongoing engagements, and institutional knowledge that becomes expensive to replace mid-project. The problem is that those switching costs protect the relationship, not the underlying work. AI eating knowledge work is not an abstract threat to IBM’s consulting business. It is the business. The analysts, the project managers, the COBOL modernization specialists — these are exactly the roles AI agents are designed to replace or substantially compress. When the work itself gets repriced, the relationship lock matters less, because you’re not replacing IBM with a competitor, you’re replacing IBM’s people with agents. The constructed switching costs don’t protect against that substitution the way they protect against migration.

The verdict is genuinely split in a way the selloff missed entirely. The infrastructure and mainframe business has deep switching costs pointing at real quality, reinforced by Default Capture that is itself pointing at something real — that converts slowly and durably, and the COBOL panic was an overreaction. The consulting and services business is directly in the path of AI eating knowledge work, with constructed switching costs that protect the relationship but not the labor — that’s substantially exposed, and on a faster timeline. IBM’s challenge isn’t that agents will migrate off its mainframes. It’s that agents will do the work its consultants were charging for. Those are different threats on different timelines, and the market priced them as the same thing.

What the Selloff Is Actually About

For a long time, markets had a stable model of the world. Platforms with network effects and scale advantages compound toward monopoly. Default Capture generates durable margin because human behavior is sticky. The winners of the last twenty years stay winners because the moats are self-reinforcing. You could disagree about growth rates or multiples but the basic shape of who wins and why was legible and broadly agreed upon. That consensus got baked into terminal value assumptions across a generation of DCF models and comps.

What’s being repriced now isn’t just the specific exposure of DoorDash or Visa or IBM. It’s the stability of the consensus itself. We are entering a period where the durability of any given moat is genuinely unclear, not because every moat is broken, but because the framework for evaluating moat durability just got much harder to agree on. Some moats convert. Some evaporate. Some split cleanly between business lines; others don’t. The analysis is more complex, the outcomes are more dispersed, and critically, being right about a company’s actual moat quality isn’t sufficient: you also need to be right about what other investors believe, and on what timeline they figure it out.

That second-order uncertainty is what’s doing most of the work in the selloff. Markets aren’t just pricing lower expected terminal values. They’re pricing wider distributions around those terminal values — more variance, less consensus, longer time to resolution. In a world where the winners were stable and legible, you got paid for identifying them early. In a world where stability itself is the thing under pressure, the premium for that identification compresses even if the underlying quality of the assets hasn’t changed yet. The repricing is rational and real. So is the imprecision. We are in the part of the transition where the old map is clearly wrong and the new one hasn’t been drawn.
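A small numerical sketch can show why a wider distribution alone can lower a price even when the expected terminal value is unchanged. It assumes a textbook mean-variance certainty equivalent (expected value minus half the risk-aversion coefficient times the variance). That is a simplification chosen for illustration, not a claim about how any particular investor prices these stocks. The scenario values and the `risk_aversion` coefficient are made up.

```python
from statistics import mean, pvariance


def certainty_equivalent(outcomes, risk_aversion):
    """Mean-variance certainty equivalent: E[X] - 0.5 * a * Var[X].

    `outcomes` are equally likely terminal values (hypothetical numbers);
    `risk_aversion` is an assumed coefficient, not an estimate.
    """
    return mean(outcomes) - 0.5 * risk_aversion * pvariance(outcomes)


# Two equally weighted scenario sets with the same expected terminal value.
narrow = [90.0, 100.0, 110.0]   # old consensus: legible, tightly clustered
wide = [40.0, 100.0, 160.0]     # new regime: same mean, far more dispersion

risk_aversion = 0.01  # assumed for illustration

ce_narrow = certainty_equivalent(narrow, risk_aversion)
ce_wide = certainty_equivalent(wide, risk_aversion)

print(f"expected value (both): {mean(narrow):.1f}")
print(f"certainty equivalent, narrow: {ce_narrow:.2f}")
print(f"certainty equivalent, wide:   {ce_wide:.2f}")
```

Under these assumed numbers the expected terminal value is identical in both cases, yet the wide distribution is worth less to a risk-averse buyer. That is the sense in which variance, and not only a lower mean, can drive a repricing.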
